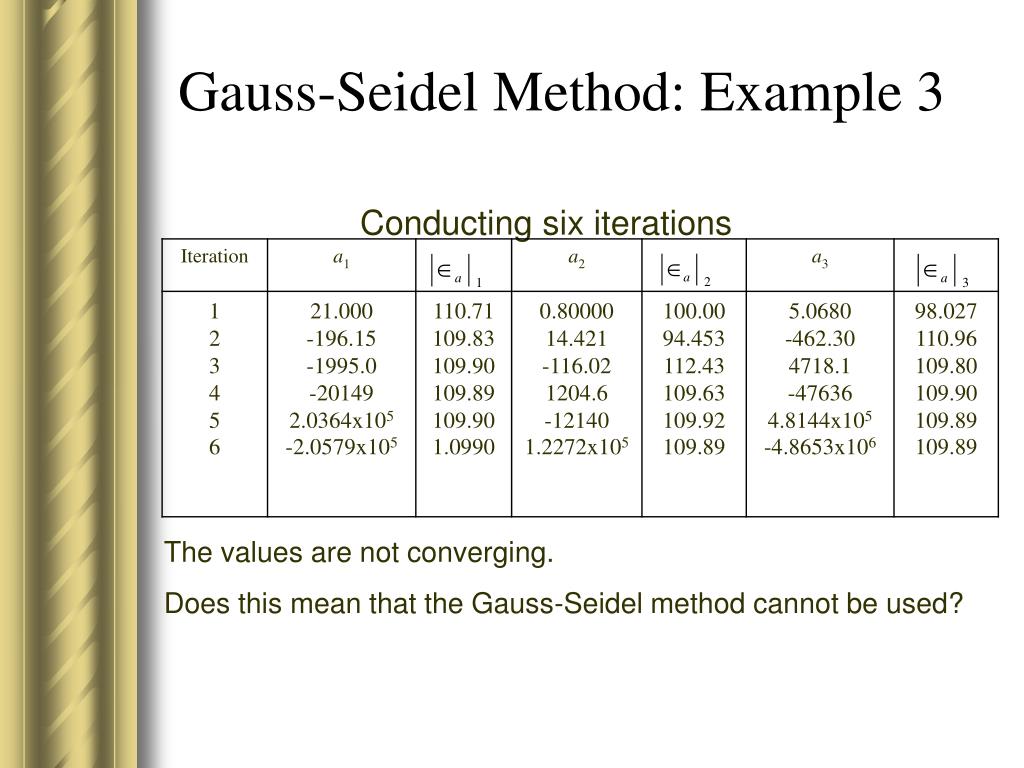

General considerations: The goal of this project was to provide a faster resolution time than the sequential version of the code, which is also provided in the repository.

How does it work? In order to parallelize the calculations, all data dependency constraints need to be omitted. This relaxation only means that the solver function needs more iterations to reach the predefined threshold. That is an acceptable trade-off, because the speedup gained by parallelizing the calculations far outweighs the cost of the extra iterations.

Decomposition: as the CUDA architecture favours fine-grained parallelization, the computational unit is defined as all the iterations a CUDA thread needs to perform in order to bring its value delta below the threshold.
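The effect of dropping the data dependencies can be illustrated on the CPU. The following is a minimal Python sketch, not the repository's CUDA code. It assumes that each simulated "thread" owns one unknown and reads every other unknown from the previous sweep's snapshot (a Jacobi-style update), which is one possible way to remove the dependencies. The names `relaxed_sweep` and `solve_relaxed`, the default threshold, and the example system are hypothetical.

```python
def relaxed_sweep(A, b, x):
    """One dependency-free sweep: every unknown is computed from the same snapshot x."""
    n = len(b)
    new_x = []
    for i in range(n):  # on a GPU, each index i would map to its own thread
        s = sum(A[i][j] * x[j] for j in range(n) if j != i)
        new_x.append((b[i] - s) / A[i][i])
    return new_x


def solve_relaxed(A, b, threshold=1e-10, max_sweeps=10_000):
    """Repeat sweeps until the largest per-unknown change drops below threshold."""
    x = [0.0] * len(b)
    for sweep in range(1, max_sweeps + 1):
        new_x = relaxed_sweep(A, b, x)
        delta = max(abs(new - old) for new, old in zip(new_x, x))
        x = new_x
        if delta < threshold:
            return x, sweep
    raise RuntimeError("no convergence within max_sweeps")


# Assumed example: a strictly diagonally dominant system, so the sweeps converge.
A = [[4.0, 1.0, 0.0],
     [1.0, 5.0, 2.0],
     [0.0, 2.0, 6.0]]
b = [6.0, 17.0, 22.0]
x, sweeps = solve_relaxed(A, b)
print(x, sweeps)
```

Because no update inside a sweep reads a value written in the same sweep, the loop over `i` has no ordering requirement, which is what makes it suitable for one thread per unknown.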

This small project contains an implementation of the Gauss-Seidel linear equation solver that uses NVIDIA CUDA to parallelize the computations on a GPU. The Gauss-Seidel method for solving systems of linear equations is an iterative method in which the values of the variables keep being updated until the change between successive iterations falls below a given threshold.
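For reference, the sequential method can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the sequential version shipped in the repository: the function name `gauss_seidel`, the default threshold, and the example system are hypothetical, and the stopping rule is assumed to be the largest per-variable change in one pass.

```python
def gauss_seidel(A, b, threshold=1e-10, max_iters=10_000):
    """Sequential Gauss-Seidel: each update immediately reuses values updated earlier in the pass."""
    n = len(b)
    x = [0.0] * n
    for iteration in range(1, max_iters + 1):
        delta = 0.0
        for i in range(n):
            # x[j] for j < i already holds this pass's value: this is the data dependency.
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            new_xi = (b[i] - s) / A[i][i]
            delta = max(delta, abs(new_xi - x[i]))
            x[i] = new_xi
        if delta < threshold:
            return x, iteration
    raise RuntimeError("no convergence within max_iters")


# Assumed example: a strictly diagonally dominant system, for which the method converges.
A = [[4.0, 1.0, 0.0],
     [1.0, 5.0, 2.0],
     [0.0, 2.0, 6.0]]
b = [6.0, 17.0, 22.0]
solution, iterations = gauss_seidel(A, b)
print(solution, iterations)
```

The in-place write to `x[i]` is what forces the inner loop to run in order, and it is this ordering that a parallel version has to give up.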
